I started to write a post that incorporated some aspects of basic statistics and it quickly blew up out of control. So, I’ve decided to break it up into a few smaller posts. This one is focused on calculating the probability of a simple outcome.

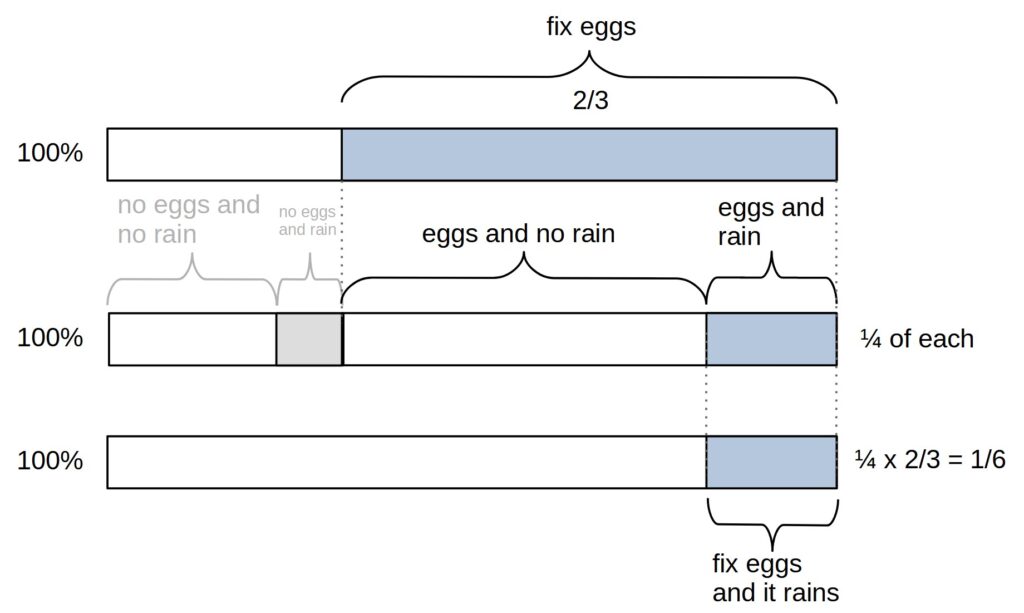

If two events are independent of each other, we can multiply their probabilities together. Say you fix eggs for breakfast on 2/3 of mornings and it rains later in the day 1/4 of the time, and neither has any influence on the other (e.g., feeling like it might rain doesn’t make you more or less likely to cook eggs). Then we can calculate the probability of both occurring on a given day by multiplying the probabilities together: $\frac{2}{3} \times \frac{1}{4} = \frac{2}{12} = \frac{1}{6}$, or about 17% of the time (1/4 of the 2/3). So, on average, a little more than one day a week, it will rain and you cooked eggs for breakfast. (Given some data, we could also test whether these two events really appear to be independent by looking for deviations from 1/6.)

Since all outcomes have to, by definition, add up to 100%, we can also calculate the probability that you did not (fix eggs for breakfast and it rained) by subtracting from one: $1 - \frac{1}{6} = \frac{5}{6}$, or about 83% of the time. I placed these in parentheses to indicate that both did not happen together. English can imply different meanings, like you did not fix eggs and it did rain, which would be $\frac{1}{3} \times \frac{1}{4} = \frac{1}{12}$, or about 8.3% of the time, or that you did not fix eggs and it didn’t rain, which is $\frac{1}{3} \times \frac{3}{4} = \frac{3}{12} = \frac{1}{4}$, or 25%. You have to be careful about details when converting language statements into mathematical calculations, but it is possible, and rather than being a hindrance it can actually help you understand both the math and the language.
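The eggs-and-rain arithmetic above can be checked with a short sketch. This is only an illustration, assuming the two events really are independent; the variable names are made up for this example, and Python’s `fractions` module is used so the results stay as exact fractions.

```python
from fractions import Fraction

# Probabilities taken from the text; the names are hypothetical.
p_eggs = Fraction(2, 3)   # you fix eggs for breakfast
p_rain = Fraction(1, 4)   # it rains later in the day

# Independent events: multiply the probabilities for each combination.
both = p_eggs * p_rain                   # eggs and rain
eggs_no_rain = p_eggs * (1 - p_rain)     # eggs, no rain
no_eggs_rain = (1 - p_eggs) * p_rain     # no eggs, rain
neither = (1 - p_eggs) * (1 - p_rain)    # no eggs, no rain

# "not (eggs and rain)" is everything except the first combination.
not_both = 1 - both

print(both, not_both, no_eggs_rain, neither)
# The four combinations cover every possible day, so they sum to one.
print(both + eggs_no_rain + no_eggs_rain + neither)
```

Note that "not (eggs and rain)" is the sum of the other three combinations, which is why it is so much larger than either "no eggs and rain" or "no eggs and no rain" on its own.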

We expect the outcome of different dice rolls or coin flips to be independent of each other (the first coin flip does not influence the outcome of the second coin flip, whether the coin is fair or biased), so we can multiply their probabilities together. The probability of getting “heads” or “tails” from a fair coin flip is 1/2. If we got heads three times in a row (h,h,h), the probability of this is $\frac{1}{2} \times \frac{1}{2} \times \frac{1}{2} = \frac{1}{8}$, or 12.5%. 1/8 is the likelihood of hhh given that the coin is fair. If the coin were double-headed (h/h), then the likelihood of hhh would be one, or 100%.
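As a small sketch of the same idea, the likelihood of hhh can be computed under the two hypotheses mentioned above (a fair coin and a double-headed coin). The function name `prob_all_heads` is just a label I made up for this example.

```python
from fractions import Fraction

def prob_all_heads(p_heads, flips):
    """Probability that every one of `flips` independent flips comes up heads."""
    return p_heads ** flips

fair = prob_all_heads(Fraction(1, 2), 3)            # fair coin
double_headed = prob_all_heads(Fraction(1, 1), 3)   # h/h coin

print(fair)           # 1/8
print(double_headed)  # 1
```

The same data (hhh) has a different likelihood depending on which hypothesis about the coin we assume.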

The slightly trickier part is calculating the probability when there is a mix of outcomes. Often we don’t care about the order, so Thh, hTh, and hhT are all two heads and one tail. (I am using a capital T for tails to help visualize it, so it doesn’t get lost as easily in the hs; this is not meant to imply that I respect the tails side of the coin more than the heads side.) Each of these individual outcomes has a 1/8 probability of occurring. However, since there are three mutually exclusive ways to get two heads and one tail, we add the three individual probabilities together, $\frac{1}{8} + \frac{1}{8} + \frac{1}{8} = \frac{3}{8}$, or a 37.5% probability of this outcome. The likelihood of two heads and one tail, given that the probability of h is 1/2 and the probability of T is 1/2 (both add up to one), is 3/8. In statistics, the vertical bar symbol “|” is used to indicate “given”, so we can write $P(\text{hhT} \mid P(\text{h}) = \frac{1}{2}) = \frac{3}{8}$. This is the probability ($P$) of the data (hhT in any order) given (|) the hypothesis ($P(\text{h}) = \frac{1}{2}$) that the coin is fair. Under our hypothesis, the probability of heads is 1/2, $P(\text{h}) = \frac{1}{2}$. This particular probability, $P(\text{hhT} \mid P(\text{h}) = \frac{1}{2})$, is known as the likelihood (of the data given the hypothesis).
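One way to convince yourself of the 3/8 is to let a program write out all eight orderings of three flips and pick the ones with two heads. This is a brute-force sketch for a fair coin only, using `itertools.product` from the standard library; the variable names are mine.

```python
from fractions import Fraction
from itertools import product

# All 2**3 = 8 ordered outcomes of three flips, e.g. "hhT".
outcomes = ["".join(flips) for flips in product("hT", repeat=3)]

# With a fair coin, every specific ordering is equally likely.
p_each = Fraction(1, 2) ** 3

# Keep the orderings that contain exactly two heads (and so one tail).
matching = [o for o in outcomes if o.count("h") == 2]

# The orderings are mutually exclusive, so their probabilities add.
likelihood = p_each * len(matching)

print(matching)    # ['hhT', 'hTh', 'Thh']
print(likelihood)  # 3/8
```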

There are two directions to go in here. One is looking at the likelihoods under different probabilities of heads, e.g., $P(\text{h}) = 1/3$ or $1/10$. Another, which I will do first, is to look some more at the combinations of different outcomes.

When we are looking at a small number of outcomes, it is easy to write down the probabilities. What are all of the possible outcomes from two coin flips? hh, hT, Th, and TT. Each of these individual outcomes has a probability of $\frac{1}{2} \times \frac{1}{2} = \frac{1}{4}$. However, getting one heads and one tails in either order, hT or Th, is $\frac{1}{4} + \frac{1}{4} = \frac{1}{2}$. So getting one h and one T (in either order) is twice as likely as getting hh. There are more ways to get a mix of outcomes, so we see a mix of outcomes more often. This is a simple statement, but it has a very deep meaning.

Let’s go to four coin flips. There is only one way to get hhhh; the probability is $\left(\frac{1}{2}\right)^4 = \frac{1}{16}$. There are four ways to get three heads and one tail, hhhT, hhTh, hThh, Thhh, so $4 \times \frac{1}{16} = \frac{4}{16} = \frac{1}{4}$. How many ways are there to get two heads and two tails? hhTT, hThT, ThhT, TThh, ThTh, and hTTh, so 6, with a probability of 6/16 = 3/8. This is starting to get tricky. As the combinations get larger, it is harder to keep track, and we are more likely to make a mistake when writing them down. How many ways are there to get four tails and three heads with seven coin flips? I won’t even try to write it out.
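Writing the orderings out by hand is error-prone, but a computer doesn’t mind. Here is a sketch that enumerates every ordering of four flips and tallies them by the number of heads; `count_by_heads` is a hypothetical helper name, not something from a library.

```python
from collections import Counter
from itertools import product

def count_by_heads(n_flips):
    """Tally the 2**n_flips ordered outcomes by how many heads each contains."""
    return Counter(flips.count("h") for flips in product("hT", repeat=n_flips))

counts = count_by_heads(4)
for heads in range(4, -1, -1):
    print(heads, "heads:", counts[heads], "ways out of", 2 ** 4)
```

For four flips this gives 1, 4, 6, 4, and 1 ways for four, three, two, one, and zero heads. Brute force like this works for small numbers of flips, but the list doubles in length with every extra flip, which is one reason a direct formula is nicer.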

There are two ways to do this. We can calculate the combinations directly using the equation for a binomial coefficient or we can look it up in Pascal’s triangle. I’m going to hold off on Pascal’s triangle because it connects to a lot of cool ideas and is worth its own blog post. The binomial coefficient is written as

$$\binom{n}{h} = \frac{n!}{h!\,T!}$$

(where $n$ is the total number of coin flips, $h$ is the number that come out heads, and $T$ is the number that come out tails) and uses factorials (!, multiplying the integers together down to one, or two because multiplying by one has no effect). This almost looks like $n$ divided by $h$ with the dividing bar missing ($\binom{n}{h}$ versus $\frac{n}{h}$), but it is not; it is a notation reserved specifically for the binomial coefficient. $\binom{n}{h}$ is read as “n choose h”. It is essentially a bookkeeping equation that keeps track of all the possible ways to divide up the outcomes. This is built into a lot of calculators and programming languages. We can even type “7 choose 3” into the Google search bar and get an answer of 35. There are 35 orders of getting three hs and four Ts in seven coin flips, ThhTThT for example. Plugging this into our equation,

$$\binom{7}{3} = \frac{7!}{3!\,4!} = \frac{5040}{6 \times 24} = 35$$

So, there are 35 ways to flip a coin seven times and get heads three times (and tails four times). We still need to calculate the probability of an individual outcome, $\left(\frac{1}{2}\right)^7 = \frac{1}{128}$, and add up the different possible orders by multiplying by 35. The likelihood of this outcome is $35 \times \frac{1}{128} = \frac{35}{128}$, or about 27.3%.
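For what it’s worth, here is how the seven-flip calculation might look in Python. This is a sketch assuming Python 3.8 or later, where `math.comb` is available; it also spells out the factorial version so the two can be compared. The variable names are mine.

```python
from fractions import Fraction
from math import comb, factorial

n, h = 7, 3   # seven flips, three heads
T = n - h     # so four tails

# Binomial coefficient from factorials, and from the built-in function.
ways_formula = factorial(n) // (factorial(h) * factorial(T))
ways_builtin = comb(n, h)

# Probability of any one specific ordering with a fair coin.
p_one_order = Fraction(1, 2) ** n

# Add up the equally likely orderings by multiplying.
likelihood = ways_builtin * p_one_order

print(ways_formula, ways_builtin)  # 35 35
print(p_one_order)                 # 1/128
print(likelihood)                  # 35/128
print(float(likelihood))           # 0.2734375
```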

By the way, you might ask why it is n choose h instead of n choose T. It is arbitrary and doesn’t matter. You can write it down both ways and still get the same answer.

$$\binom{7}{3} = \frac{7!}{3!\,4!} = \frac{7!}{4!\,3!} = \binom{7}{4} = 35$$

I can’t resist pointing out that there is also a connection between the behavior of the binomial coefficient as the number of coin flips gets larger and the normal or Gaussian distribution, also known as the bell curve. However, to really talk about this will be a separate post. One cool thing about mathematics, and many fields when you get deep enough into it, is that different ideas start to connect to each other, sometimes in surprising ways, that give you a deeper understanding of the processes involved.

The general likelihood equation we are using is

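$$P(\text{data} \mid \text{hypothesis}) = \binom{n}{h}\, P(\text{h})^{h}\, P(\text{T})^{T}$$

Here $n$ is the number of flips, $h$ and $T$ are the numbers of heads and tails observed, and $P(\text{h})$ and $P(\text{T})$ are the probabilities of heads and tails on a single flip.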

In the case of fair coin flips $P(\text{h}) = P(\text{T}) = \frac{1}{2}$, but this also works in cases where the two probabilities are unequal. The probability of a “one” coming up when rolling a die is 1/6. The probability it is not one is 5/6. If we roll a die seven times (or seven dice once), the probability of three ones and four “not ones” is

$$\binom{7}{3}\left(\frac{1}{6}\right)^{3}\left(\frac{5}{6}\right)^{4} = 35 \times \frac{1}{216} \times \frac{625}{1296} = \frac{21875}{279936} \approx 0.078,$$

or about 7.8%.
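The general likelihood calculation fits in a few lines of code. The sketch below is one possible way to write it; `binomial_likelihood` and its parameter names are hypothetical labels for this post, and it assumes independent trials with the same probability each time.

```python
from fractions import Fraction
from math import comb

def binomial_likelihood(n, k, p):
    """Probability of exactly k "successes" in n independent trials,
    where each trial succeeds with probability p."""
    return comb(n, k) * p ** k * (1 - p) ** (n - k)

# Three heads in seven fair coin flips.
coins = binomial_likelihood(7, 3, Fraction(1, 2))

# Three ones in seven rolls of a fair die.
dice = binomial_likelihood(7, 3, Fraction(1, 6))

print(coins, float(coins))  # 35/128, about 0.273
print(dice, float(dice))    # 21875/279936, about 0.078
```

Using `Fraction` keeps the answers exact; passing ordinary floats such as `0.5` also works if a decimal answer is all you need.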

So, to wrap this part up, you can multiply and add probabilities together to calculate the likelihood of a particular outcome (in this case flipping coins or rolling dice) when there are two possible outcomes of each event (heads or tails, or fixing eggs for breakfast or not). (More than two outcomes is not that different but will be a separate post.) You can also double-check the calculations to see that the sum of all possible outcomes adds up to 1 or 100%.
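As a sketch of that double-check, the code below computes the likelihood of every possible count (zero ones through seven ones in seven die rolls) and confirms that they add up to exactly one. The helper name `distribution` is made up for this example.

```python
from fractions import Fraction
from math import comb

def distribution(n, p):
    """Likelihood of each possible count k = 0..n of "successes" in n trials."""
    return [comb(n, k) * p ** k * (1 - p) ** (n - k) for k in range(n + 1)]

dice = distribution(7, Fraction(1, 6))    # number of ones in seven die rolls
coins = distribution(7, Fraction(1, 2))   # number of heads in seven coin flips

print(sum(dice))   # 1
print(sum(coins))  # 1
```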

The way to really see this and understand the probabilities is to, rather than only passively reading about examples, make up a simple question, draw it out on a piece of paper, and go through the calculation yourself a couple of times to get comfortable with multiplying and adding probabilities together.

Links

- A discussion of the derivation of the binomial coefficient, which I did not go into here but came across and am sharing if you are interested, https://math.stackexchange.com/questions/119480/derivation-of-binomial-coefficient-in-binomial-theorem

Media

- Coin flipping image from Pietiäinen (1918) Tasavallan Presidentit, https://commons.wikimedia.org/wiki/File:St%C3%A5hlberg_flipping_coin2.jpg

- Dice image, Rhetos (2021), https://commons.wikimedia.org/wiki/File:W%C3%BCrfelschauer.jpg
